Topic modeling of newspapers

As the Covid-19 pandemic affects so many lives, governments, individuals and businesses need to track how it has changed society, foresee the consequences of the disease and of lockdown measures, and adapt accordingly. Tracking the discussions that take place in media and on news websites is one way to monitor the current situation, detect the socio-economic effects of Covid-19, and see whether different regions are returning to normal life or moving toward a new normal.

This work shows how news content changed over the period in which the outbreak erupted in the UK, and how the news evolved as Covid-19 cases rose and the UK government imposed restrictions on society. The analysis helps us understand the tone and focus of the media during that period. It also demonstrates how natural language processing can be used to digest different sources of information and discern trends in them.

Code Available on Github

Our Method

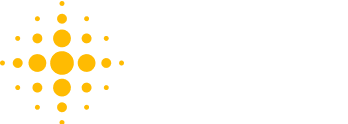

To better understand how news evolved with the outbreak of Covid-19, we defined society-related indicators, such as the number of publications on different topics and their sentiment, and monitored them over time. For instance, we looked into the diversity of topics discussed in the news and how their distribution and sentiment changed over time. For our analysis, we used two NLP approaches, topic modelling and sentiment analysis, following the NLP workflow shown below.

In the NLP workflow, from left to right, we first ingest the data: UK news articles obtained from Socialgist. We also obtained Oxford University’s Government Response Tracker [1], which records policies such as containment and closure, economic policies and income support, and health system policies. In addition, we added the confirmed Covid-19 cases in the UK so that we could analyze the trend of topics alongside the trend of government measures and the spread of the disease.

The result is a clean dataset ready for topic modelling and sentiment analysis. Finally, we created plots to visualize the evolution of news content over time.
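As a rough illustration of how articles can be lined up with the tracker and case data, the sketch below joins daily article counts with daily indicators on the publication date. The record layout, the field names (`confirmed_cases`, `containment_level`) and all the numbers are hypothetical toy values, not the actual schema or data used in this analysis.

```python
from collections import Counter
from datetime import date

# Hypothetical article records: (publication date, source, text).
articles = [
    (date(2020, 3, 23), "Metro", "Lockdown announced across the UK"),
    (date(2020, 3, 23), "TheSun", "Football season suspended"),
    (date(2020, 3, 24), "Metro", "Shops close as restrictions begin"),
]

# Hypothetical daily indicators keyed by date (toy numbers, not real
# tracker or case data).
indicators = {
    date(2020, 3, 23): {"confirmed_cases": 100, "containment_level": 2},
    date(2020, 3, 24): {"confirmed_cases": 150, "containment_level": 3},
}


def align_by_date(articles, indicators):
    """Return one row per day with the article count and that day's indicators."""
    counts = Counter(pub_date for pub_date, _source, _text in articles)
    rows = []
    for day in sorted(counts):
        row = {"date": day, "n_articles": counts[day]}
        # Days without indicator data keep only the article count.
        row.update(indicators.get(day, {}))
        rows.append(row)
    return rows


daily = align_by_date(articles, indicators)
for row in daily:
    print(row)
```

Keying everything on the date keeps the article side and the indicator side independent, so either one can be refreshed without touching the other.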

NLP workflow

For this analysis, we focused on the following UK-based news providers from 1 January to the end of May 2020: Metro, TheSun, Bloomberg and MarketWatch.

The first two, Metro and TheSun, target the general public and cover a variety of topics. We also analyzed financial news from Bloomberg and MarketWatch to give particular attention to financial topics, as we know businesses have been widely affected by Covid-19.

In this blog post, we share our topic modelling results. You can read about our sentiment analysis work in “Sentiment analysis of newspapers”. All the code for this analysis is publicly available on GitHub.

Results of Topic Modeling

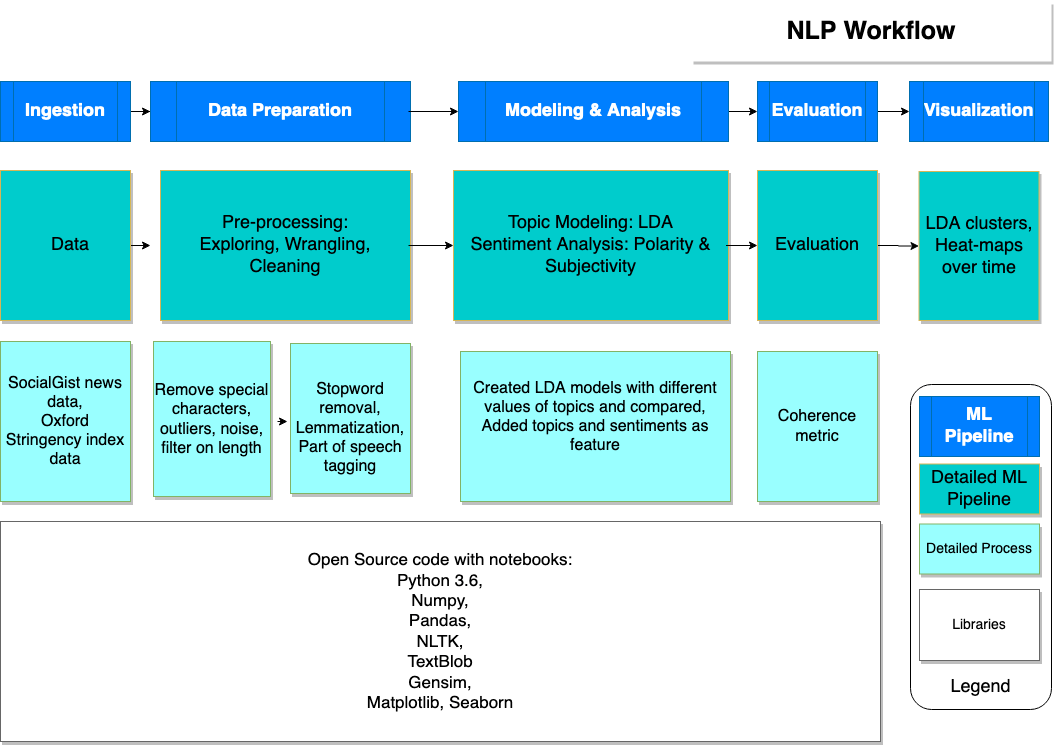

Topic modelling is an unsupervised learning method in natural language processing in which a collection of texts is clustered into topics. One well-known algorithm is LDA (latent Dirichlet allocation), which discovers abstract topics by estimating the distribution of words per topic and the distribution of topics per document. We used the Gensim library to discover topics and pyLDAvis to visualize them.

This is an example of an LDA plot in which ten topics are clustered. On the left, the clusters are shown, with their size indicating the marginal topic distribution. On the right, the most important words of a topic are shown with their frequency within that topic (red bars) against their overall frequency in the entire corpus (blue bars).
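To make the two distributions that LDA estimates more concrete, here is a minimal, standard-library sketch of LDA fitted by collapsed Gibbs sampling. It is not the code used in this analysis: we used Gensim, which fits LDA with a different inference method (online variational Bayes). The function name `lda_gibbs`, the hyperparameter values and the toy documents are all assumptions made for illustration.

```python
import random


def lda_gibbs(docs, num_topics, iterations=200, alpha=0.1, beta=0.01, seed=0):
    """Fit LDA by collapsed Gibbs sampling on a list of token lists.

    Returns (doc_topic, topic_word, vocab): doc_topic[d][k] is the share of
    topic k in document d, topic_word[k][w] is the probability of vocabulary
    word w under topic k.
    """
    rng = random.Random(seed)
    vocab = sorted({w for doc in docs for w in doc})
    word_id = {w: i for i, w in enumerate(vocab)}
    n_words = len(vocab)

    # Count tables maintained by the sampler.
    n_dk = [[0] * num_topics for _ in docs]            # doc d, topic k
    n_kw = [[0] * n_words for _ in range(num_topics)]  # topic k, word w
    n_k = [0] * num_topics                             # tokens per topic

    # Start from a random topic assignment for every token.
    z = []
    for d, doc in enumerate(docs):
        z.append([])
        for w in doc:
            k = rng.randrange(num_topics)
            z[d].append(k)
            n_dk[d][k] += 1
            n_kw[k][word_id[w]] += 1
            n_k[k] += 1

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                wid, k = word_id[w], z[d][i]
                # Remove the token's current assignment from the counts.
                n_dk[d][k] -= 1
                n_kw[k][wid] -= 1
                n_k[k] -= 1
                # Unnormalized conditional probability of each topic.
                weights = [
                    (n_dk[d][t] + alpha) * (n_kw[t][wid] + beta)
                    / (n_k[t] + n_words * beta)
                    for t in range(num_topics)
                ]
                k = rng.choices(range(num_topics), weights=weights)[0]
                z[d][i] = k
                n_dk[d][k] += 1
                n_kw[k][wid] += 1
                n_k[k] += 1

    # Smoothed estimates of topics per document and words per topic.
    doc_topic = [
        [(n_dk[d][k] + alpha) / (len(doc) + num_topics * alpha)
         for k in range(num_topics)]
        for d, doc in enumerate(docs)
    ]
    topic_word = [
        [(n_kw[k][w] + beta) / (n_k[k] + n_words * beta) for w in range(n_words)]
        for k in range(num_topics)
    ]
    return doc_topic, topic_word, vocab


# Toy, already-tokenized documents (hypothetical).
toy_docs = [
    ["virus", "lockdown", "virus", "cases"],
    ["market", "shares", "bank", "market"],
    ["lockdown", "cases", "virus"],
    ["shares", "bank", "airline"],
]
doc_topic, topic_word, vocab = lda_gibbs(toy_docs, num_topics=2)
for k, row in enumerate(topic_word):
    top_words = sorted(zip(row, vocab), reverse=True)[:3]
    print(k, [word for _prob, word in top_words])
```

Each row of `doc_topic` and `topic_word` sums to one, which is what lets them be read as the "topics per document" and "words per topic" distributions described above. On a real corpus a library such as Gensim is the practical choice; the sampler here only shows the mechanics.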

We trained and analyzed the outcome of three LDA models:

- Coarse-grained topic modelling on Metro and TheSun news articles to discover major topics and their trends

- Fine-grained:

  - topic modelling on the same set of articles to get insight into the sub-topics and their evolution through time

  - topic modelling on Bloomberg and MarketWatch to get a better understanding of financial topics and their changes through the Covid-19 period.

Coarse-grained topics

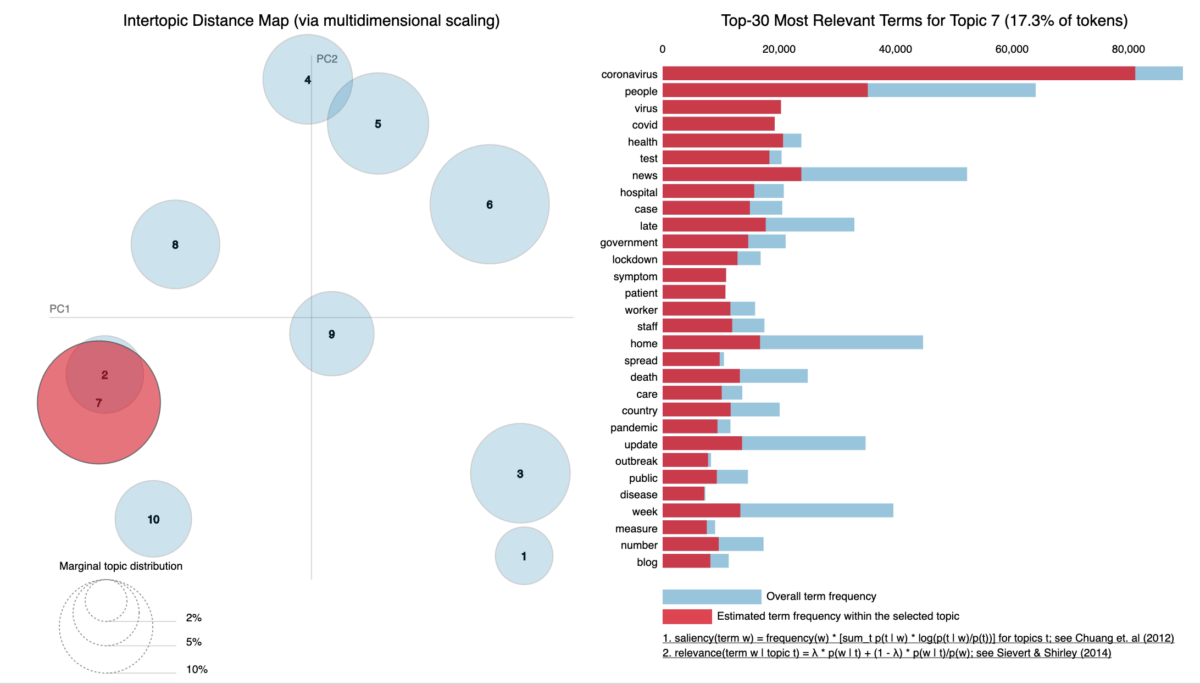

As we can see in the plot, with the outbreak of Covid-19 in the UK, articles about ‘COVID’ emerged in media content, and as Covid-19 cases decreased, so did the number of publications. We expected this trend; however, it is interesting to see how other topics were affected. For instance, the number of publications about ‘Business’ increased and the number about ‘Crime’ decreased with the outbreak of Covid-19. News on ‘Politics’ and ‘Entertainment’ was also published less than before Covid-19.

Fine-grained topics

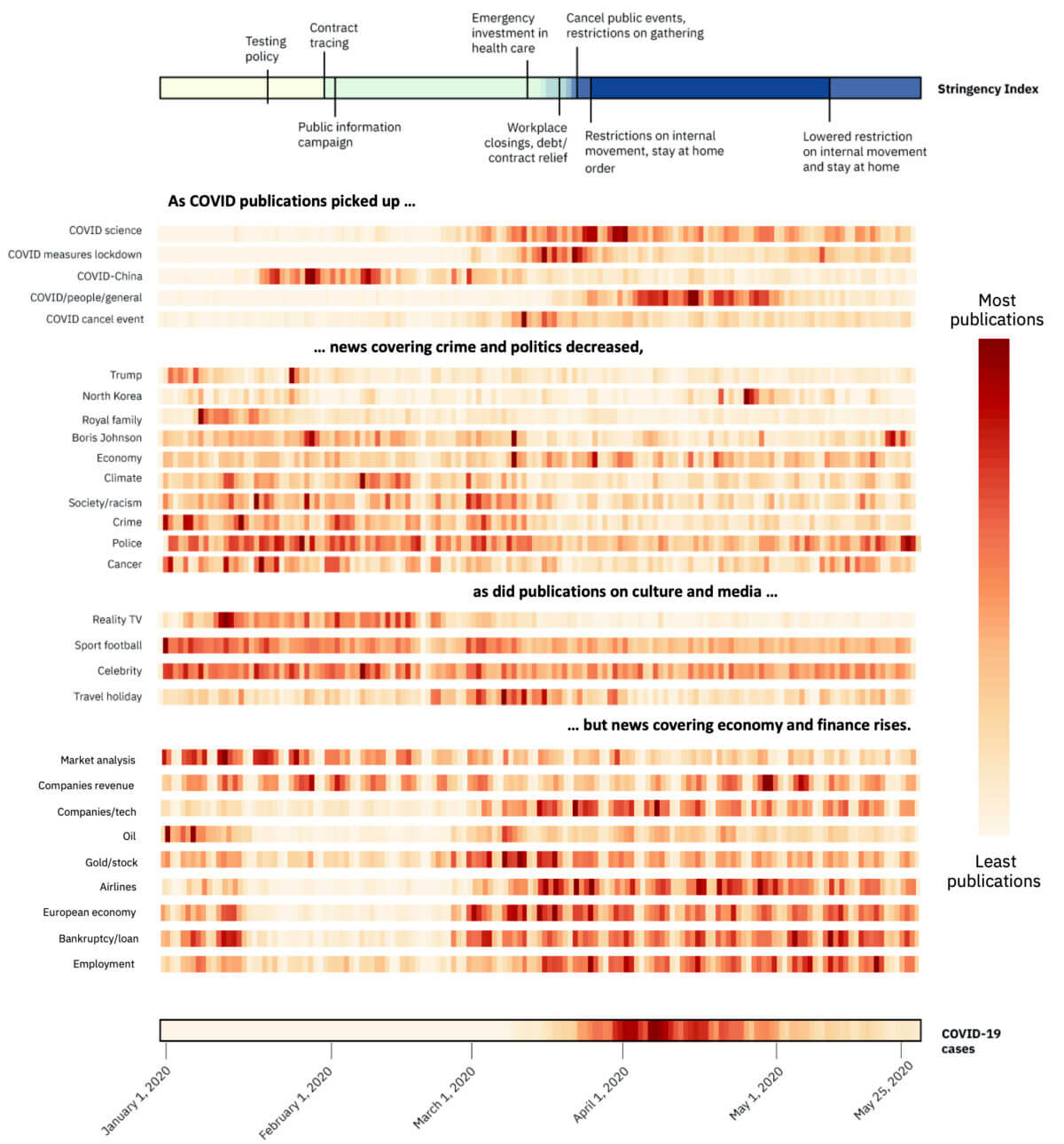

The plot shows the result of fine-grained topic modelling. First, we show the specific topics around COVID, such as articles about the science and the disease itself, government restrictions, Covid-19 in China before the outbreak in Europe, and events that were cancelled as restrictions were imposed.

Next come topics around politics, climate, society and crime, followed by culture and entertainment. Finally, we show the result of topic modelling on financial news articles from Bloomberg and MarketWatch. We can clearly see that there were many discussions around airlines, employment, and bankruptcy during the Covid-19 period and at the time governments imposed restrictions.


Main Takeaway

Topic modelling is a powerful exploratory method for news content analysis: it gives insight into the diversity of topics the media focus on, which indirectly reflects the focus of our society. Heatmaps of the distribution of articles over time show how the trend of topics changed during the Covid-19 period.
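The data behind such a heatmap can be sketched as a small table of topic shares per time period. The example below assumes each article has already been given a single topic label (for instance, its dominant LDA topic) and groups by calendar month; the function name, the labels and the monthly granularity are illustrative assumptions rather than the exact procedure used here.

```python
from collections import Counter, defaultdict
from datetime import date


def topic_share_by_month(records):
    """Return {(year, month): {topic: share of that month's articles}}.

    records is an iterable of (publication date, topic label) pairs.
    """
    counts = defaultdict(Counter)
    for pub_date, topic in records:
        counts[(pub_date.year, pub_date.month)][topic] += 1
    shares = {}
    for month in sorted(counts):
        total = sum(counts[month].values())
        shares[month] = {t: n / total for t, n in counts[month].items()}
    return shares


# Hypothetical (date, topic) pairs, not real results.
records = [
    (date(2020, 1, 15), "Crime"),
    (date(2020, 1, 20), "Politics"),
    (date(2020, 3, 25), "COVID"),
    (date(2020, 3, 26), "COVID"),
    (date(2020, 3, 27), "Business"),
    (date(2020, 3, 28), "COVID"),
]
shares = topic_share_by_month(records)
for month, row in shares.items():
    print(month, row)
```

Each month becomes one column of the heatmap and each topic one row. Normalizing to shares rather than raw counts is a design choice: it keeps months with very different publication volumes comparable.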

Disclaimer: This information can be used for educational and research purposes. Please note that this analysis was made on a subset of news content. The authors do not recommend generalising the results or drawing conclusions for decision-making from these sources alone.

Authors:

Mehrnoosh Vahdat is a Data Scientist with the IBM Data Science & AI Elite team, where she specializes in Data Science, Analytics platforms, and Machine Learning solutions.

Vincent Nelis is a Senior Data Scientist with the IBM Data Science & AI Elite team, where he specializes in Data Science, Analytics platforms, and Machine Learning solutions.

Special thanks to Erika Agostinelli, Anthony Ayanwale, Rachael Dottle, Swetha Batta, Alexander Lang, and Mara Pometti who helped us in this work.

We are a team of data scientists from IBM’s Data Science & AI Elite Team, IBM’s Cloud Pak Acceleration Team, and Rolls-Royce’s R2 Data Labs working on Regional Risk-Pulse Index: forecasting and simulation within Emergent Alliance. Have a look at our challenge statement!

[1] Hale, Thomas, Sam Webster, Anna Petherick, Toby Phillips, and Beatriz Kira (2020). Oxford COVID-19 Government Response Tracker, Blavatnik School of Government. Data use policy: Creative Commons Attribution CC BY standard.